The Visual Similarity of Movie Posters

This past winter, my friends and I were trying to find something to watch on one of the major video streaming services and, as usual, we were drowning in decision paralysis. Page after page, and still nothing. After a while, all the movie covers began to blend together, and we gave up. This got me thinking about the visual similarity of movie posters and the seemingly unoriginal designs most of them have…

After a little research, it turns out that others have also noticed this pattern of visual similarity [1, 2, 3]. Within a short amount of time, I came across dozens of common tropes that movie studios have been using for their covers. Because all of the existing analysis had been done by hand, I began wondering whether there was a way to automate the process and potentially find new visual relationships that hadn’t been noticed yet. Luckily, thanks to deep learning, this is a fairly trivial task.

Scraping the movie covers from the internet yielded just over 169k images, of which 2,636 were identical duplicates and were removed. The pool was then reduced by a further ~5k images because they were boring, algorithmically generated covers. Using TensorFlow and the InceptionV3 [4] architecture pretrained on the ImageNet dataset, I pulled the network's values from the second-to-last layer (the pooling layer just before the final fully connected classifier). This resulted in a 2048x1 vector per image, which was then reduced to a 2x1 vector using t-SNE [5]. Finally, the image vectors were translated from t-SNE space into a structured grid via combinatorial optimization, by solving the assignment problem: every image is given its own grid cell at minimal total cost. The results, as seen below, were then tiled and served through Leaflet. Make sure you click the full screen option!

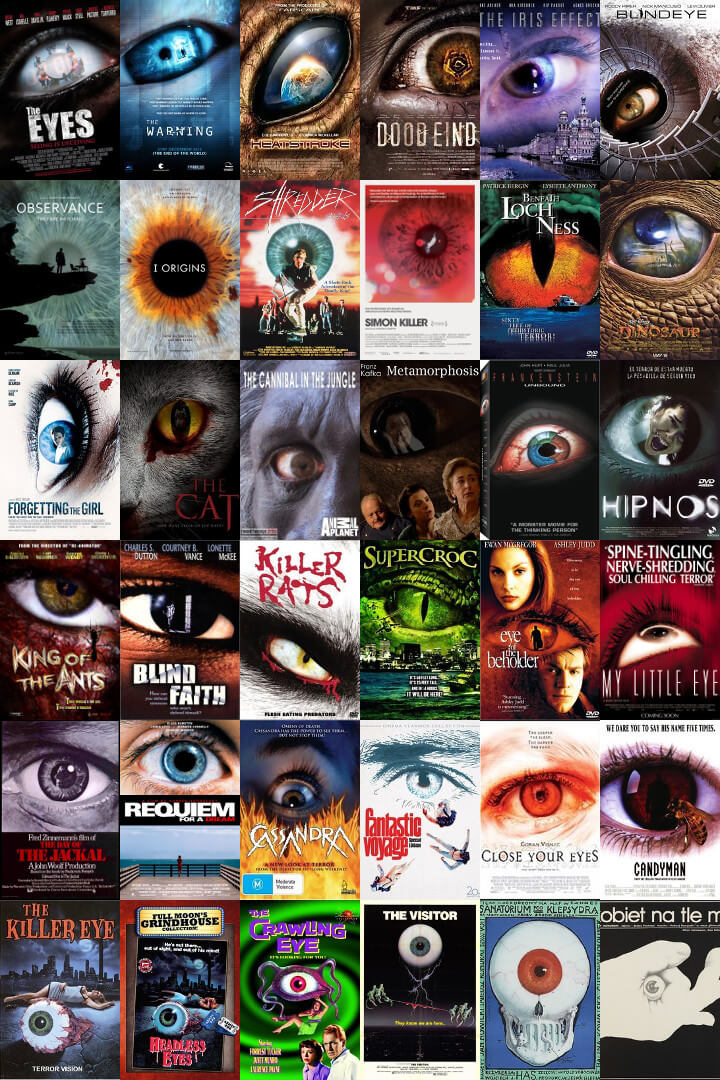

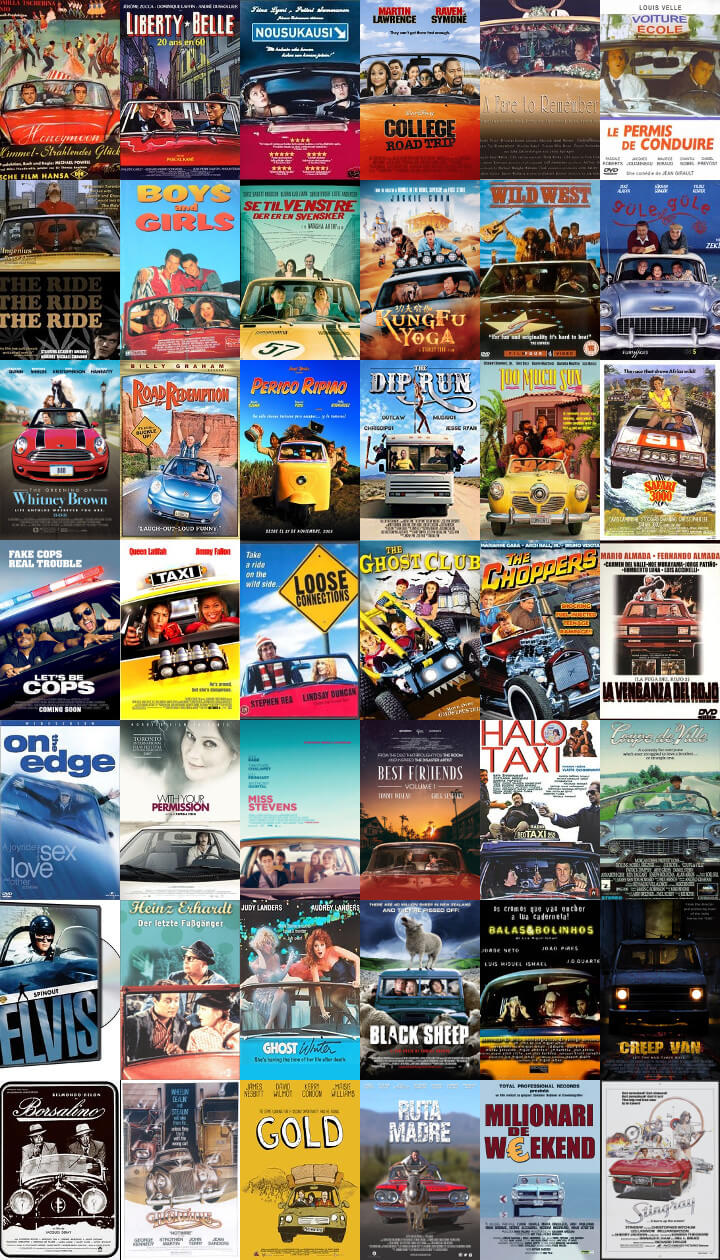

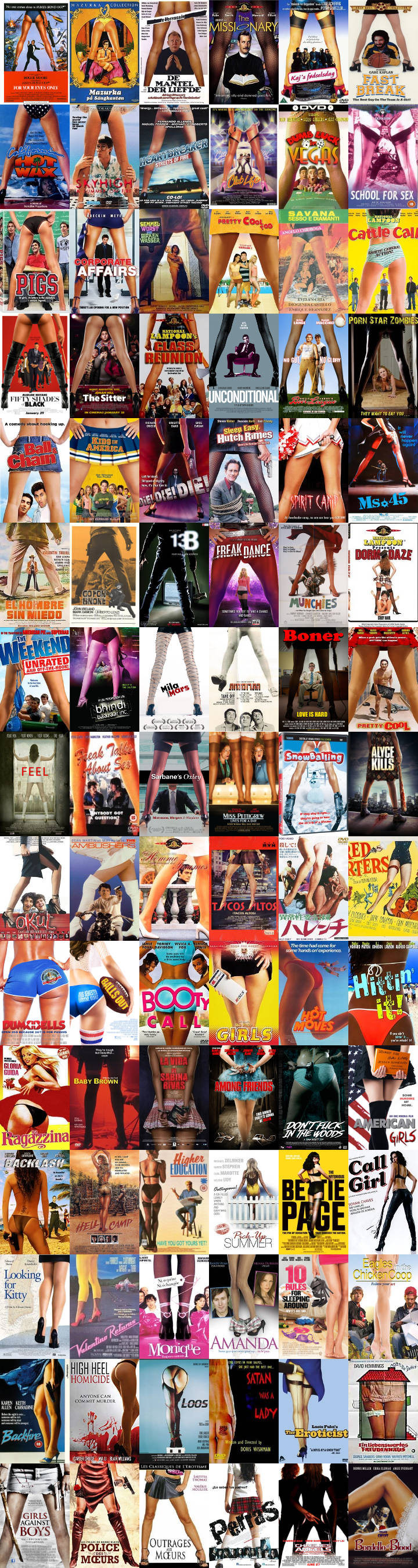

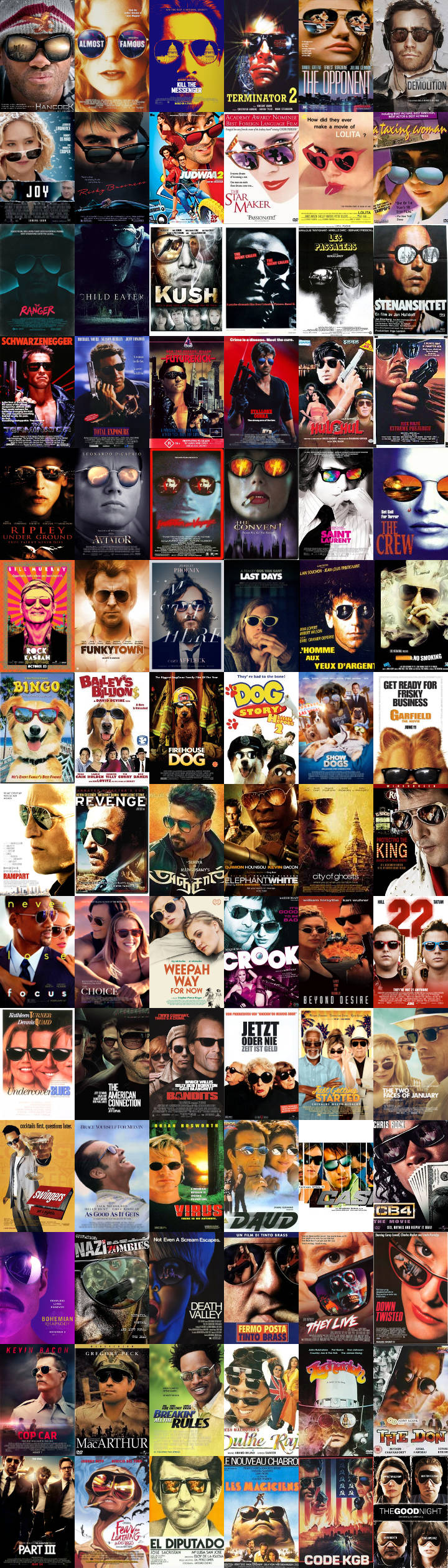
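The grid step described earlier (moving images from t-SNE space onto a structured grid by solving the assignment problem) can be sketched roughly as follows. This is a minimal illustration, not the code behind the map: the function name `snap_to_grid`, the squared-distance cost, and the choice of SciPy's `linear_sum_assignment` as the solver are all assumptions. A dense cost matrix like this one also only fits in memory for a few thousand images, so the full collection would need something more scalable.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment
from scipy.spatial.distance import cdist


def snap_to_grid(points, rows, cols):
    """Give each 2-D point its own grid cell, minimizing total squared distance.

    Hypothetical helper: returns an (n, 2) integer array of (row, col) cells,
    one per input point, with no cell used twice.
    """
    points = np.asarray(points, dtype=float)
    n = len(points)
    if n > rows * cols:
        raise ValueError("grid has fewer cells than points")

    # Rescale the embedding to the unit square so it is comparable to the grid.
    span = np.ptp(points, axis=0)
    span[span == 0] = 1.0  # avoid dividing by zero on a degenerate axis
    unit = (points - points.min(axis=0)) / span

    # Enumerate grid cells and place their centres in the same unit square.
    # The first point coordinate is matched to rows, the second to columns.
    r, c = np.meshgrid(np.arange(rows), np.arange(cols), indexing="ij")
    cells = np.column_stack([r.ravel(), c.ravel()])
    centres = cells / np.maximum([rows - 1, cols - 1], 1)

    # cost[i, j] is the price of putting point i in cell j; the solver
    # returns a one-to-one matching with the smallest total cost.
    cost = cdist(unit, centres, metric="sqeuclidean")
    point_idx, cell_idx = linear_sum_assignment(cost)

    grid = np.empty((n, 2), dtype=int)
    grid[point_idx] = cells[cell_idx]
    return grid
```

Calling `snap_to_grid(embedding, rows, cols)` on an `(n, 2)` array of t-SNE coordinates returns one `(row, col)` pair per image, which can then be used to place each poster as a tile.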

Like most neural networks built for image classification, InceptionV3 is based around the convolution. Because of this, edges become the areas of interest within an image. While exploring the landscape, it’s interesting to note how the network sometimes nails similarity while at other times its choices are questionable at best. This is partly because what we as humans define as similar can be quite different from what the network considers similar. It’s also partly because most movie posters are feature rich and t-SNE treats all positions within the feature vector fairly equally (i.e. trees == people == boats). As a result, a movie poster with a forest and a single person is roughly equal to a poster with a couple of trees and multiple people. Regardless, the network is able to produce some pretty interesting clusters. These clusters were captured using DBSCAN while in t-SNE space and lightly trimmed down with some manual input. Below is a small sample of these clusters.

The clusters above are among the most interesting and easily identifiable in terms of a common theme or pattern. Coincidentally, some are also near duplicates of previously compiled clusters [6]. Many of the clusters produced by DBSCAN, however, involved similar color schemes or less obvious patterns of contrast, yielding much less interesting visuals.
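For readers curious about the clustering step, here is a rough sketch of running DBSCAN on points that are already in 2-D t-SNE space. It uses scikit-learn's `DBSCAN`; the helper name `cluster_embedding` and the `eps` and `min_samples` values are placeholders of my choosing for illustration, not the settings used for the clusters shown here.

```python
import numpy as np
from sklearn.cluster import DBSCAN


def cluster_embedding(points, eps=1.5, min_samples=5):
    """Group 2-D embedding points into density-based clusters.

    Hypothetical helper: returns a dict mapping cluster id to an array of
    point indices. Points that DBSCAN labels -1 (noise) are left out.
    """
    points = np.asarray(points, dtype=float)
    labels = DBSCAN(eps=eps, min_samples=min_samples).fit_predict(points)
    return {
        int(label): np.flatnonzero(labels == label)
        for label in np.unique(labels)
        if label != -1
    }
```

Because DBSCAN does not need the number of clusters up front and simply marks sparse regions as noise, it suits a map where only some areas form tight, recognizable groups. The resulting index arrays can then be reviewed by hand, much like the manual trimming mentioned above.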

Overall, I had no idea how similar a lot of movie posters are, but after completing this project it has become fairly obvious. As an example, some of the largest clusters (sunglasses, automotive, horses, motorcycles) total close to a thousand…

1. Sidney Fussell, Hollywood keeps using these 13 movie poster clichés over and over again (May 19, 2016)
2. Christophe Courtois, Question difficile : de quelle couleur sont les robes dans les films? (August 21, 2011)
3. Christophe Courtois, Les affiches de films… de dos (June 21, 2011)
4. C. Szegedy, V. Vanhoucke, S. Ioffe, J. Shlens, and Z. Wojna, Rethinking the Inception Architecture for Computer Vision (Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016)
5. L. van der Maaten and G. Hinton, Visualizing Data using t-SNE (Journal of Machine Learning Research 9, 2008)
6. Christophe Courtois, Ces affiches me sortent par les yeux! (June 17, 2011)